Safeguarding AI Projects: A Data Quality Guide for Machine Learning, Generative AI, and Agentic Systems

Overview

Data quality failures in AI are rarely intentional, but they are inevitable without proactive governance. In traditional machine learning, defects often surface visibly: a dashboard shows an anomaly, an analyst flags it, the model is retrained. But generative AI and agentic AI shatter that containment. A chatbot powered by stale knowledge can confidently mislead customers with no error signal. An autonomous procurement agent can commit budget based on incomplete supplier data before any human reviews it. The further AI moves from passive prediction to autonomous action, the less tolerance we have for poor data quality, and the harder it becomes to catch problems before they cause real damage. This guide provides a structured approach to ensuring data quality across ML, generative AI, and agentic AI, with concrete steps, code examples, and common pitfalls to avoid.

Prerequisites

- Familiarity with Python (pandas, numpy)

- Basic understanding of machine learning pipelines and model training

- Knowledge of generative AI concepts (RAG, prompt engineering)

- Optional: experience with data validation libraries (Great Expectations, Pydantic)

Step-by-Step Instructions

1. Data Profiling and Validation for Traditional ML

The foundation of any AI project is the training and inference data. For traditional ML, data quality issues (missing values, outliers, schema drift) are detectable through systematic profiling.

Action: Use Great Expectations to define and run expectations on your dataset.

    import great_expectations as ge
    import pandas as pd

    # Uses the legacy Dataset API (PandasDataset); newer Great Expectations
    # releases use a different, context-based API.
    df = ge.dataset.PandasDataset(pd.read_csv('training_data.csv'))

    # Each expect_* call runs immediately and returns its validation result.
    results = [
        df.expect_column_values_to_not_be_null('price'),
        df.expect_column_values_to_be_between('age', 0, 120),
        df.expect_column_distinct_values_to_be_in_set('category', ['A', 'B', 'C']),
    ]

    for r in results:
        print(f"{r['expectation_config']['expectation_type']}: {'Pass' if r['success'] else 'Fail'}")

Why it works: These checks catch schema violations, range errors, and missing data before they silently degrade model performance.
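Schema drift, also mentioned in this step, can be checked with plain pandas before any expectations run. The following is a minimal sketch, not part of the original pipeline: `EXPECTED_SCHEMA`, `check_schema`, and the choice of type predicates are illustrative assumptions, and the column names simply mirror the ones used in the expectations.

```python
import pandas as pd
from pandas.api import types as pdt

# Hypothetical schema: column name -> predicate the column's dtype must satisfy.
EXPECTED_SCHEMA = {
    "price": pdt.is_float_dtype,
    "age": pdt.is_integer_dtype,
    "category": pdt.is_string_dtype,
}

def check_schema(df, expected):
    """Return a list of human-readable schema problems (empty if none)."""
    problems = []
    for column, dtype_check in expected.items():
        if column not in df.columns:
            problems.append(f"missing column: {column}")
        elif not dtype_check(df[column]):
            problems.append(f"{column}: failed {dtype_check.__name__} (dtype is {df[column].dtype})")
    for column in df.columns:
        if column not in expected:
            problems.append(f"unexpected column: {column}")
    return problems

# Example: 'price' arrived as text, 'age' is missing, and an extra column appeared.
incoming = pd.DataFrame({"price": ["9.99", "n/a"], "category": ["A", "B"], "extra": [1, 2]})
for problem in check_schema(incoming, EXPECTED_SCHEMA):
    print(problem)
```

Running a check like this at ingestion time gives a clear failure message when an upstream source changes shape, rather than a confusing error deeper in the pipeline.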

2. Monitoring Data Drift in Production

Data drift — when the distribution of input data changes over time — is a major cause of model degradation. For ML models, continuous monitoring is essential.

Action: Use scipy.stats.ks_2samp to compare feature distributions between training and current data.

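    import numpy as np
    from scipy.stats import ks_2samp

    # Reference sample of the feature (e.g., 'age') saved at training time.
    reference = np.load('training_feature_dist.npy')
    # get_current_feature_values is a placeholder for your own production data accessor.
    current = get_current_feature_values('age')

    # Two-sample Kolmogorov-Smirnov test: a small p-value means the two samples
    # are unlikely to come from the same distribution.
    stat, p_value = ks_2samp(reference, current)
    if p_value < 0.05:
        print("Drift detected in feature 'age': alert the team and consider retraining.")

For generative AI, drift can manifest as changes in user query patterns or knowledge base updates. Implement similar checks on input embeddings.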
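One way to apply a similar check to embeddings is sketched below. Note that it swaps the KS test for a different technique: a permutation test on the distance between batch centroids, since the KS test is defined for one-dimensional samples. The function names, the 0.05 threshold, and the synthetic data are assumptions for illustration only; in practice the two arrays would hold embeddings of reference and recent queries.

```python
import numpy as np

def centroid_distance(a, b):
    """Euclidean distance between the mean vectors of two embedding batches."""
    return float(np.linalg.norm(a.mean(axis=0) - b.mean(axis=0)))

def embedding_drift_test(reference, current, n_permutations=200, seed=0):
    """Permutation test on centroid distance.

    Returns (observed_distance, p_value). A small p-value suggests the two
    batches of embeddings do not share the same mean.
    """
    rng = np.random.default_rng(seed)
    observed = centroid_distance(reference, current)
    pooled = np.vstack([reference, current])
    n_ref = len(reference)
    at_least_as_large = 0
    for _ in range(n_permutations):
        shuffled = rng.permutation(pooled)  # shuffles rows, returns a copy
        if centroid_distance(shuffled[:n_ref], shuffled[n_ref:]) >= observed:
            at_least_as_large += 1
    # Add-one correction keeps the p-value strictly positive.
    return observed, (at_least_as_large + 1) / (n_permutations + 1)

# Synthetic stand-ins for real embeddings: the "current" batch has a shifted mean.
demo_rng = np.random.default_rng(42)
reference_embeddings = demo_rng.normal(0.0, 1.0, size=(200, 8))
current_embeddings = demo_rng.normal(0.5, 1.0, size=(200, 8))

distance, p_value = embedding_drift_test(reference_embeddings, current_embeddings)
if p_value < 0.05:
    print(f"Embedding drift suspected (centroid distance {distance:.3f}, p={p_value:.3f})")
```

A centroid test only detects shifts in the mean; changes in spread or cluster structure would need a richer statistic.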

3. Factuality and Freshness Guards for Generative AI

Generative models (e.g., chatbots using RAG) are highly sensitive to the quality of their context data. A stale or incorrect knowledge base produces confident wrong answers.

Action: Implement a data freshness check and factuality verification pipeline.

    import warnings
    from datetime import datetime

    def is_knowledge_fresh(doc_timestamp, max_age_days=30):
        # Assumes doc_timestamp is a naive datetime in the same local time as datetime.now().
        age = (datetime.now() - doc_timestamp).days
        return age <= max_age_days

    # In a RAG pipeline, before answering (retrieve is a placeholder for your retriever):
    context_chunks = retrieve(query)
    stale_chunks = [chunk for chunk in context_chunks if not is_knowledge_fresh(chunk.metadata['timestamp'])]
    if stale_chunks:
        warnings.warn("Using stale context; consider a fallback or regeneration with fresh data.")

For factuality, use a second LLM (e.g., GPT-4) to verify claims against the retrieved context, or integrate with external fact-checking APIs.
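The verification step can be structured so that the judge is pluggable. The sketch below is an assumption about how such a pipeline might look, not a reference implementation: `ClaimVerdict`, `split_into_claims`, `verify_answer`, and `keyword_judge` are hypothetical names, and `keyword_judge` is a deliberately crude stand-in (keyword overlap) that you would replace with a call to a second LLM or a fact-checking service.

```python
import re
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class ClaimVerdict:
    claim: str
    supported: bool

def split_into_claims(answer: str) -> List[str]:
    """Naive splitter: treat each sentence as one claim."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]

def verify_answer(answer: str, context_chunks: List[str],
                  judge: Callable[[str, str], bool]) -> List[ClaimVerdict]:
    """Ask the judge whether each claim is supported by the retrieved context."""
    context = "\n".join(context_chunks)
    return [ClaimVerdict(claim, judge(claim, context)) for claim in split_into_claims(answer)]

def keyword_judge(claim: str, context: str) -> bool:
    """Stand-in judge: every word longer than three characters must appear in the context."""
    context_words = set(re.findall(r"[a-z0-9]+", context.lower()))
    content_words = [w for w in re.findall(r"[a-z0-9]+", claim.lower()) if len(w) > 3]
    return bool(content_words) and all(w in context_words for w in content_words)

chunks = ["The warranty period is 24 months.", "Returns are accepted within 30 days."]
draft = "The warranty period is 24 months. Shipping is free worldwide."
for verdict in verify_answer(draft, chunks, keyword_judge):
    print(("supported  " if verdict.supported else "UNSUPPORTED") + " " + verdict.claim)
```

Keeping the judge behind a simple callable makes it easy to start with a cheap heuristic and later switch to a model-based verifier without touching the rest of the pipeline.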

4. Governance for Agentic AI

Agentic AI systems take actions (e.g., placing orders, updating databases) based on data. Quality failures here have immediate consequences. The approach is to enforce guardrails on the data before the agent acts.

Action: Use Pydantic models to validate all data entering an agent's decision loop.

    from pydantic import BaseModel, ValidationError, validator

    # Note: `validator` is the Pydantic v1 decorator; Pydantic v2 deprecates it
    # in favor of `field_validator`.
    class SupplierData(BaseModel):
        supplier_id: str
        price: float
        stock_quantity: int

        @validator('price')
        def price_must_be_positive(cls, v):
            if v <= 0:
                raise ValueError('Price must be positive')
            return v

        @validator('stock_quantity')
        def stock_must_be_nonnegative(cls, v):
            if v < 0:
                raise ValueError('Stock quantity cannot be negative')
            return v

    # Before the agent commits budget (raw_input and commit_purchase are placeholders):
    try:
        validated = SupplierData(**raw_input)
        commit_purchase(validated)  # Agent can proceed
    except ValidationError as e:
        # Block the action and raise an error that your alerting path handles.
        raise RuntimeError("Invalid supplier data: action blocked") from e

In addition, maintain an audit log of all data provenance, so you can trace back any failure to its root cause.
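An audit log of this kind can be as simple as append-only JSON lines. The following is a minimal sketch under stated assumptions: `provenance_record`, `append_audit_log`, the field names, and the `supplier_api` source label are all illustrative, and an in-memory buffer stands in for a real log file or log service.

```python
import hashlib
import io
import json
from datetime import datetime, timezone

def provenance_record(source, payload, decision):
    """Describe one agent decision: where the data came from and what was decided."""
    # Sorting keys makes the hash independent of dict ordering.
    canonical = json.dumps(payload, sort_keys=True).encode("utf-8")
    return {
        "logged_at": datetime.now(timezone.utc).isoformat(),
        "source": source,
        "payload_sha256": hashlib.sha256(canonical).hexdigest(),
        "decision": decision,
    }

def append_audit_log(stream, record):
    """Append one record as a single JSON line."""
    stream.write(json.dumps(record) + "\n")

# In production this would be a file opened in append mode or a log service.
audit_log = io.StringIO()
record = provenance_record(
    source="supplier_api",
    payload={"supplier_id": "S-1", "price": 10.0, "stock_quantity": 5},
    decision="blocked: validation failed",
)
append_audit_log(audit_log, record)
print(audit_log.getvalue())
```

Storing a hash of the input rather than the input itself keeps the log small and avoids duplicating sensitive data, while still letting you confirm later which exact payload drove a decision.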

Common Mistakes

1. Over-reliance on validation during training only. Many teams run data quality checks solely on the training set. In production, data shifts — you must monitor continuously.

2. Ignoring temporal aspects. For generative AI, knowledge base freshness is critical. Stale data is often not flagged because the model answers confidently. Always timestamp your data sources and check before retrieval.

3. Assuming LLMs can self-correct for data quality. LLMs are not perfect truth detectors. They will happily generate plausible-sounding answers from incomplete or incorrect context. Build explicit factuality verification into your pipeline.

4. Not governing agentic AI input. Agentic AI systems that act automatically (e.g., make purchases, update records) need validated input schemas. Without them, a single bad data point can trigger a costly action.

5. Forgetting about data lineage. When something goes wrong, you need to know which dataset version, model version, and pipeline run produced the failure. Maintain detailed metadata.

Summary

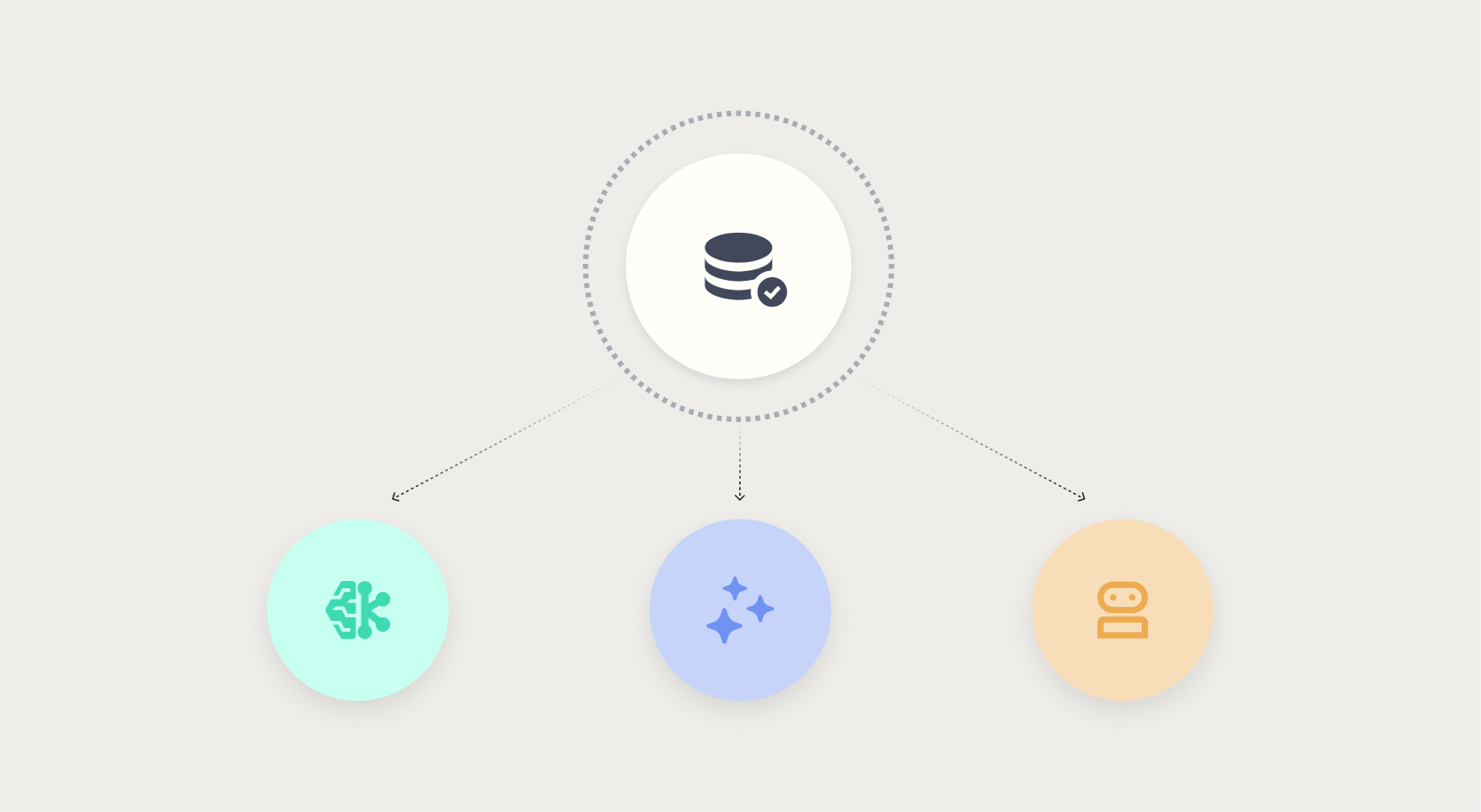

Data quality is not a one-time task—it is an ongoing discipline that must adapt to the type of AI system. For traditional ML, profiling and drift detection catch predictable failures. For generative AI, freshness and factuality guards prevent confident misinformation. For agentic AI, input validation and auditability are non-negotiable. By embedding these checks into your pipeline, you reduce the risk of silent failures and build trust in your AI systems.

Key takeaway: The more autonomous your AI, the more proactive you must be about data quality before an action is taken. The cost of failure increases with autonomy, but the principles of validation, monitoring, and governance remain the same.
