7 Key Reasons Intel Is Poised to Become the Biggest Winner in the AI Inference Era

Introduction: The AI Inference Shift

The artificial intelligence landscape is undergoing a fundamental transformation. After years of heavy investment in training massive models like GPT-4 and Llama, the focus is now shifting to inference—putting those models to work on real-world data. This transition has profound implications for chipmakers. While Nvidia and Broadcom have dominated the training phase, the rules of the game change when it comes to inference. According to Deloitte, inference workloads will consume two-thirds of all AI computing power by 2026, up from 50% just last year. That growing demand for inference-optimized silicon creates a golden opportunity for Intel—a company often overlooked in the AI race. Here are seven key reasons why Intel is set to become the biggest winner in the AI inference era.

1. Inference Demands Different Architecture

Training a large language model requires massive parallel processing power, an area where Nvidia's GPUs excel. Inference has a different profile: it must answer individual queries quickly and efficiently, often at small batch sizes, so latency and cost per query matter more than brute-force parallelism. Intel's Xeon processors and Gaudi accelerators are aimed at these workloads, and they can serve variable-length inputs and latency-sensitive tasks without a large GPU cluster. As inference becomes the dominant workload, Intel's design philosophy, which emphasizes balanced performance per watt, lines up well with market needs.

2. Data Center Infrastructure Already Runs on Intel

Hyperscalers like AWS, Azure, and Google Cloud have built massive data centers around Intel Xeon processors, so retrofitting these facilities for inference doesn't require a complete overhaul. Unlike training, which usually demands specialized GPU clusters, inference on small and mid-sized models can often run on CPU-based servers, especially those with Intel's built-in Advanced Matrix Extensions (AMX), introduced with 4th Gen Xeon Scalable processors. These instructions accelerate the matrix operations at the heart of AI models without requiring separate accelerators. As a result, Intel can offer a cost-effective, low-friction upgrade path for cloud providers already invested in its ecosystem. This installed base is a powerful moat.
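Whether an existing server can actually use AMX depends on the CPU generation, so it is worth checking before counting a fleet as inference-ready. The sketch below is one way to do that on Linux, assuming the kernel reports the `amx_tile`, `amx_bf16`, and `amx_int8` flags in `/proc/cpuinfo`. The function names are illustrative, not part of any Intel tool.

```python
from pathlib import Path

# Flag names Linux uses in /proc/cpuinfo for AMX-capable CPUs.
AMX_FLAGS = ("amx_tile", "amx_bf16", "amx_int8")


def amx_flags_in(cpuinfo_text: str) -> set[str]:
    """Return the AMX-related flags found in /proc/cpuinfo-style text."""
    found: set[str] = set()
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            found.update(flag for flag in value.split() if flag in AMX_FLAGS)
    return found


def host_amx_flags(path: str = "/proc/cpuinfo") -> set[str]:
    """Read the local cpuinfo file; return an empty set if it is unavailable."""
    try:
        return amx_flags_in(Path(path).read_text())
    except OSError:
        return set()


if __name__ == "__main__":
    flags = host_amx_flags()
    print("AMX flags:", sorted(flags) if flags else "none detected")
```

An empty result means the server would fall back to older vector instructions, and the CPU-only inference case above would be weaker for that machine.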

3. Gaudi Accelerators Are Purpose-Built for Inference

Intel's Gaudi AI accelerators, developed by Habana Labs (acquired by Intel in 2019), are dedicated deep-learning chips that Intel pitches on inference throughput per dollar. Unlike Nvidia's H100, which is sold as a flagship for both training and inference, Gaudi chips compete mainly on cost. In some published benchmarks, Gaudi2 has matched or exceeded Nvidia's A100 on inference tasks. Intel also ships the SynapseAI software stack for deploying models on Gaudi, alongside its broader oneAPI tooling. As inference scales, Gaudi's price-performance advantage should attract cost-conscious hyperscalers and enterprises looking to deploy AI without breaking the bank.

4. Edge Inference Is an Intel Stronghold

AI inference isn't just happening in the cloud. It's moving to the edge: smartphones, cameras, IoT devices, and autonomous machines. Intel has a long-standing position in edge computing through its Core, Atom, and Movidius product lines. Nvidia's edge offerings (Jetson) are powerful, but can cost more and draw more power than many distributed deployments need. Intel's chips offer a balance of performance, power consumption, and cost suited to millions of distributed inference nodes. As industries like manufacturing, retail, and healthcare adopt AI for real-time decision-making, Intel's edge portfolio is positioned to capture a large share of the inference market.

5. Software Ecosystem and Developer Familiarity

Developers know Intel. The oneAPI initiative provides a unified programming model across CPUs, GPUs, and accelerators, simplifying inference deployment. Intel also develops OpenVINO, an open-source toolkit for optimizing and running inference models on Intel hardware. This familiarity reduces the learning curve for enterprises moving from traditional CPU-based workloads to AI inference. Nvidia's CUDA ecosystem, by contrast, is powerful but proprietary and tied to Nvidia hardware. As inference becomes ubiquitous, Intel's developer-friendly tools should speed adoption and keep customers on its platform.

6. Power Efficiency Matters More at Scale

Serving billions of queries a day requires enormous energy. Data center operators are increasingly prioritizing power efficiency to control costs and meet sustainability goals. For models that fit comfortably on CPUs, Intel's Xeon processors with integrated AI acceleration (AMX) can deliver inference without the added power draw of discrete GPUs. The Sierra Forest and Granite Rapids generations (sold as Xeon 6) are designed to improve efficiency further. As inference workloads scale, the total cost of ownership (TCO) advantage of Intel's chips will become a decisive factor for cloud providers and enterprise data centers alike.
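The energy side of that TCO argument comes down to simple arithmetic: watts drawn, divided by queries served per second, times the price of electricity. The sketch below shows the calculation. The function name and every input value are placeholders for illustration, not measurements of any Intel or Nvidia system, and the result covers electricity only, not hardware, cooling, or staffing.

```python
def energy_cost_per_million_queries(power_watts: float,
                                    queries_per_second: float,
                                    usd_per_kwh: float) -> float:
    """Electricity cost in USD to serve one million queries at steady load."""
    if power_watts <= 0 or queries_per_second <= 0 or usd_per_kwh < 0:
        raise ValueError("power and throughput must be positive; price must be non-negative")
    seconds_needed = 1_000_000 / queries_per_second
    # 1 kWh equals 3.6 million watt-seconds.
    kwh_used = power_watts * seconds_needed / 3_600_000
    return kwh_used * usd_per_kwh


if __name__ == "__main__":
    # Placeholder inputs: (average power in watts, sustained queries per second).
    # Replace with figures measured on your own hardware and model.
    scenarios = {
        "server_a": (400.0, 50.0),
        "server_b": (1500.0, 300.0),
    }
    for name, (watts, qps) in scenarios.items():
        cost = energy_cost_per_million_queries(watts, qps, usd_per_kwh=0.10)
        print(f"{name}: ${cost:.3f} per million queries")
```

What decides the comparison is watts per unit of throughput, not raw power draw, so a low-power server only wins if it keeps up on queries per second for the model in question.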

7. Deloitte's Forecast Validates the Trend

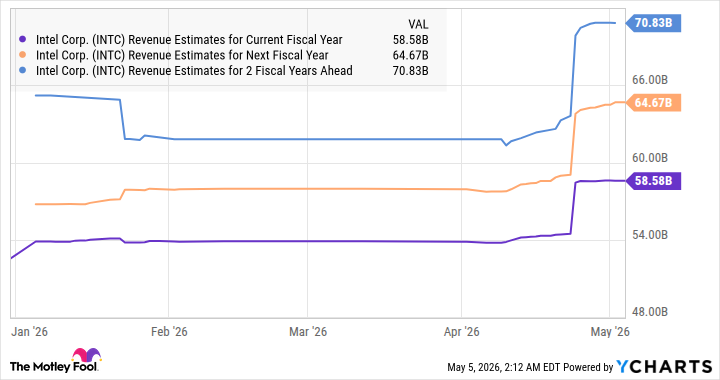

Deloitte's estimate that inference will claim two-thirds of AI compute by 2026 is a wake-up call for the industry. It signals a long-term shift away from the current training-centric market. Broadcom's custom ASICs and Nvidia's GPUs remain strong in training, but inference's rise changes the competitive dynamics. Intel's diversified portfolio—spanning CPUs, accelerators, and edge silicon—positions it to serve all inference segments. While Nvidia may still lead in high-end training, Intel is uniquely positioned to become the volume leader in inference, where unit shipments and total addressable market will be far larger.

Conclusion: The Inference Era Belongs to Intel

The AI industry is entering a new phase where deployment matters more than development. Inference is where the real-world value of AI is unlocked, and it demands different hardware than training. Intel, with its dominant data center presence, Gaudi accelerators, edge computing strength, power efficiency, and accessible software stack, is primed to win this era. While Nvidia and Broadcom will not disappear, Intel's ability to serve the massive, distributed, and cost-sensitive inference market makes it the company most likely to come out as the biggest winner. Investors and tech leaders should keep a close eye on Intel as the inference wave crests.
