GitHub Unveils Defense-in-Depth Security Framework for AI Agents in CI/CD Pipelines

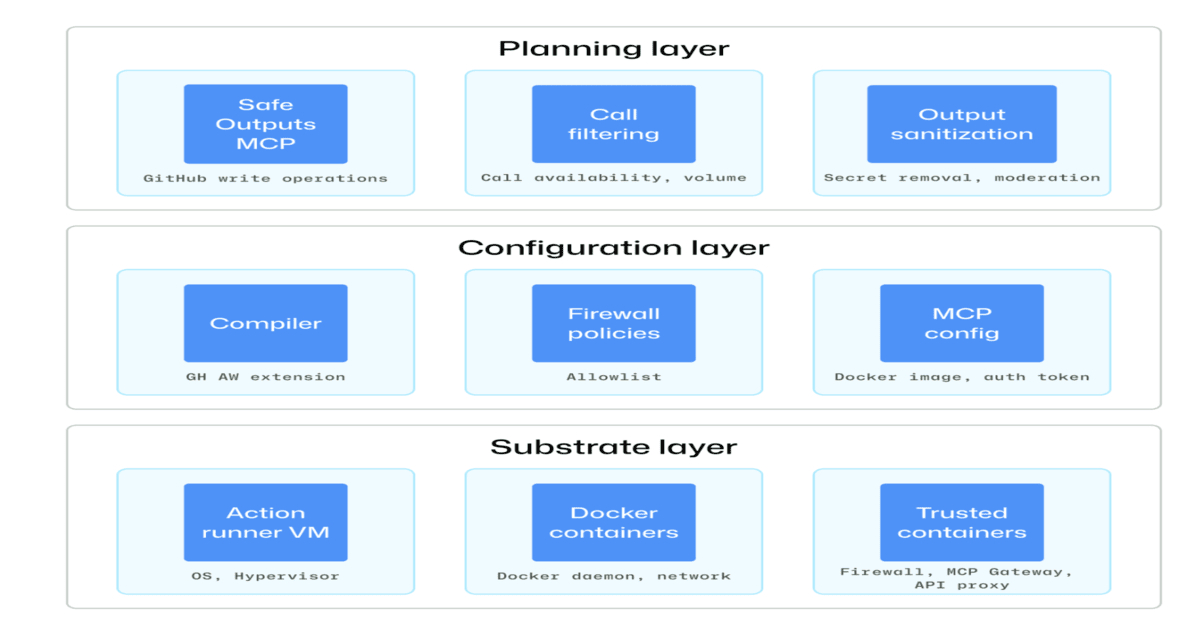

GitHub today released a new security architecture designed to protect CI/CD pipelines from risks posed by autonomous AI agents. The framework, built on defense-in-depth principles, focuses on isolation, constrained execution, and full auditability.

The move comes as developers increasingly integrate agentic workflows, in which AI tools autonomously write, test, and deploy code, into continuous integration and delivery systems. Without guardrails, such agents could be exploited through prompt injection or privilege escalation, or could take unintended actions.

Key Security Measures

The architecture mandates sandboxed environments for each agent, ensuring no cross-contamination between tasks. Permissions are strictly limited to the minimum required for each step, and every action is logged with an immutable audit trail.
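One common way to make an audit trail tamper-evident is to chain entries by hash, so that altering any earlier record invalidates every later one. The sketch below is illustrative only and is not GitHub's implementation; the function names `append_entry` and `verify_chain` and the entry fields are assumptions made for this example.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash that precedes the first entry


def _digest(body):
    """Hash an entry body deterministically (keys sorted before encoding)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log, agent_id, action):
    """Append an entry whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"agent_id": agent_id, "action": action, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log):
    """Return True only if every entry is unmodified and correctly linked."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {key: entry[key] for key in ("agent_id", "action", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

In a real deployment the log would live in append-only storage outside the agent's sandbox; an in-memory list is used here only to keep the example short.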

"We're treating AI agents as untrusted actors," said a GitHub security team lead. "Even a benign agent can be tricked into catastrophic commands if it has too much access."

Additionally, execution is constrained by runtime policies that cap resource usage and block dangerous operations, such as outbound network calls or file system writes outside designated paths.
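A deny-by-default policy check of this kind might look like the following minimal sketch. The `RuntimePolicy` class, its fields, the `/workspace/out` path, and the shape of the `action` dictionary are all hypothetical, chosen for illustration rather than taken from GitHub's framework.

```python
import posixpath
from dataclasses import dataclass


@dataclass(frozen=True)
class RuntimePolicy:
    # Hypothetical defaults: one writable directory, no network, 60 CPU seconds.
    writable_roots: tuple = ("/workspace/out",)
    allow_network: bool = False
    max_cpu_seconds: int = 60


def is_write_allowed(policy, path):
    """Allow writes only to absolute paths that stay inside a writable root."""
    if not path.startswith("/"):
        return False
    # Collapse ".." segments so "/workspace/out/../../etc" cannot slip through.
    # Symlinks are not resolved here; a real sandbox must handle them as well.
    target = posixpath.normpath(path)
    return any(
        posixpath.commonpath([root, target]) == root
        for root in policy.writable_roots
    )


def evaluate(policy, action):
    """Return True if the requested action is permitted; deny anything unknown."""
    kind = action.get("kind")
    if kind == "network":
        return policy.allow_network
    if kind == "write":
        return is_write_allowed(policy, action.get("path", ""))
    if kind == "exec":
        return action.get("cpu_seconds", 0) <= policy.max_cpu_seconds
    return False
```

Checks like these are only advisory unless the sandbox enforces them at the operating-system or container level, since an agent that can bypass the checking code can bypass the policy.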

Background

The rise of large language models (LLMs) in dev tools has introduced new attack surfaces. Agentic workflows—where AI not only suggests but also executes code—amplify traditional CI/CD risks like credential theft and supply chain compromise.

Previous incidents, such as the 2023 'Copilot leak', in which a prompt injection tricked an AI into revealing secrets, highlighted the need for stronger controls. GitHub's new framework directly addresses those vulnerabilities.

What This Means

For DevOps teams, this architecture provides a blueprint to safely adopt agentic automation without sacrificing security. It also sets a precedent for other platforms building similar AI capabilities.

"This is a necessary step for the industry," noted a DevSecOps analyst at Gartner, speaking on background. "Without these guardrails, we're one misconfigured agent away from a major breach."

The framework is available now for GitHub Enterprise customers, with plans to extend it to all tiers later this year.
